Reflections on Event Streaming as Confluent Turns Five – Part 2

When people ask me the very top-level question “why do people use Kafka,” I usually lead with the story in my last post, where I talked about how Apache Kafka® is helping us deliver on the promises the cloud made to us a decade ago. But I follow it up quickly with a second and potentially unrelated pattern: real-time data pipelines. These provide a different set of motivations for using an event streaming platform than scaling and microservices: specifically, the need to produce analytics results and business insights faster than the next day, which is the cadence most of us inherited early in our careers.

If it’s not real-time data, it’s old data

When I was a younger developer (well, when I was a younger developer, I was writing firmware on small microcontrollers whose “database” consisted of 200 bytes of RAM, but stick with me here), relational databases had only recently become mature and stable data infrastructure platforms. Around that same time, the disciplines, tooling, and consulting companies that would come to define business analytics were just being formed. The pattern was that every night, your ETL process would dump that day’s transactional activity into your newfangled analytic data warehouse.

It wasn’t just the best we could do; it was a revolutionary achievement that put powerful new insights into the hands of business leaders faster than they had ever had them before. This was in the days when “please allow four to six weeks for delivery” was still a recent echo in the air, and next-day analytics sounded really fast. Batch was good enough.

Until it wasn’t. Business is much more globalized than it was 30 years ago, and a nightly cadence doesn’t make as much sense when it’s not clear when “night” is for a global enterprise. Consumer expectations have also shifted dramatically from the era of four-to-six-weeks to “wait, that’s not on Prime?” In other words, working with yesterday’s data just might not be possible. You are probably being asked to deliver more than that.

You see signs of this tension in shrinking ETL batch times: overnight was the original gold standard, then data architects figured out how to run batches hourly, then they started trying 15-minute batches, and so on. This is a nice evolutionary trajectory, but eventually it shows signs of a strained paradigm. And the last thing you want is to be responsible for delivering on executives’ demands when the tools you have available to you are under stress.

Batch vs. real-time streams of data

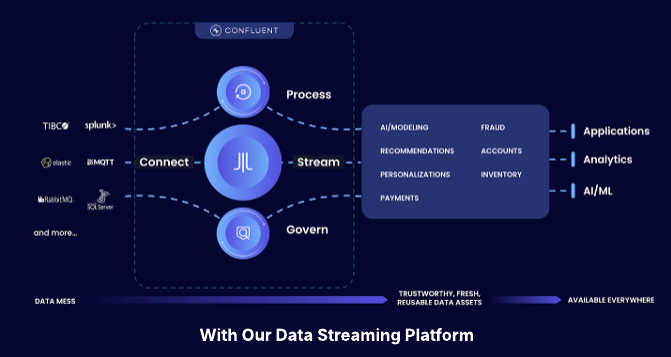

So, businesses need data-driven insights based on things that are happening right now, and that’s where real-time data pipelines come in. Pulling data from a database in batches gets harder and harder to sustain as the batch window shrinks toward minutes, whereas once you have an event streaming platform in place, it is relatively straightforward to get those results in seconds.

Perhaps the easiest-to-understand use case here is fraud detection. It’s just not valuable to know that fraud took place in a transaction you cleared yesterday; maybe you can provide the DA with evidence months down the line, but your money is gone. Instead, you need to know that it’s happening right now, inside the loop of the transaction clearing process itself, so you can refuse the transaction in real time. This has to be real time. Why? Because on the other end of this transaction, there is a human who needs immediate confirmation that a trade has been made or that a transaction has gone through. Industry heavyweights like Capital One use event streaming on Kafka for this very task.
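To make “inside the loop” a little more concrete, here is a minimal sketch in Python of a per-event fraud rule. Everything in it is an assumption for illustration: the `Transaction` fields, the `VelocityCheck` rule (more than three transactions on one card within 60 seconds), and the in-memory list standing in for a stream of events. It is not how Capital One or any particular Kafka application does it; the point is only that the decision is made as each event arrives rather than in a batch the next day.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    card_id: str
    amount: float
    timestamp: float  # seconds, from any monotonically increasing clock


class VelocityCheck:
    """Hypothetical rule: decline a card that transacts too often in a short window."""

    def __init__(self, max_events: int = 3, window_seconds: float = 60.0):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._recent = defaultdict(deque)  # card_id -> timestamps still in the window

    def approve(self, tx: Transaction) -> bool:
        recent = self._recent[tx.card_id]
        # Drop timestamps that have aged out of the window.
        while recent and tx.timestamp - recent[0] >= self.window_seconds:
            recent.popleft()
        # Count this attempt too, even if it ends up declined.
        recent.append(tx.timestamp)
        return len(recent) <= self.max_events


def process(stream, check):
    """Yield (transaction, approved) one event at a time, as events arrive."""
    for tx in stream:
        yield tx, check.approve(tx)


# An in-memory list stands in for the event stream in this sketch.
events = [
    Transaction("card-A", 20.0, 0.0),
    Transaction("card-A", 35.0, 10.0),
    Transaction("card-B", 12.5, 15.0),
    Transaction("card-A", 50.0, 20.0),
    Transaction("card-A", 900.0, 25.0),  # fourth card-A event within 60 seconds
    Transaction("card-A", 15.0, 200.0),  # earlier events have aged out by now
]

decisions = [approved for _, approved in process(events, VelocityCheck())]
print(decisions)
```

In a real pipeline the `events` list would be replaced by a consumer reading from a topic, and the per-card state would need to survive restarts, but the shape of the logic (keep a little state per key, decide on every event) stays the same.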

Of course, there are countless uses for real-time data pipelines beyond fraud detection in the finance and retail industries. For example, the software stack of prescription benefits provider Express Scripts was originally built around a mainframe. And hey, plenty of things are, and sometimes they work just fine. However, mainframes can be costly, and they often do not lend themselves to architectures we would describe as “agile” or “low latency” or other things we normally like. Accordingly, Express Scripts has refactored its data architecture into a low-latency data pipeline based on Confluent Platform. They’re a great example of a business that couldn’t easily remain competitive using technology paradigms of decades past. They responded appropriately, and are reaping the benefits.

Many more examples are coming to light every day, and if you’d like to learn more about how to build this kind of thing and how real-time pipelines fit into broader business concerns, I can’t think of a better use of your time—if you will pardon a moment of promotion—than attending Kafka Summit, where Capital One and Express Scripts shared their stories last year, and where many more developers are set to share their experiences in a few weeks.

If you’re convinced but your boss isn’t, I’ve got you covered. I hope to see you there.
